Empowering OpenSSH

Over the last 2+ years, I've done a lot with ssh. Many of the things I've done have had a DRAMATIC impact on the day-to-day lives of many (approximately 75) people. That number is as small as it is simply because of the difficulty of sharing what I've done with other people. Like many administrators, my days are so full of problems that it is hard to make time for a meeting about a seemingly low-priority item. So, I've decided to consolidate as much of the information as possible here to share.

SSH Keys

If there were just one thing that I'd say has the most impact on all things ssh related, it would have to be ssh keys. Without ssh keys, much of what I'm about to describe would be impossible, or at least extremely difficult. SSH keys are, in a word, imperative to being able to fully use all of ssh's features. Not only do they let you log in interactively without being prompted for a password, they also let non-interactive processes log in automatically without a password prompt. Further, you can configure the authorized_keys file on the remote server to run a specific command (the command= option) no matter what the client is trying to do, and you can restrict where the key may be used from (the from= option). So, ssh keys: they are a good thing. If you don't already have a pair of public and private key files, create them now.
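For illustration, here is roughly what such a restricted entry looks like. `from=`, `command=`, `no-pty` and `no-port-forwarding` are standard authorized_keys options (see the AUTHORIZED_KEYS FILE FORMAT section of sshd(8)); the network pattern, the script path and the truncated key text are placeholders, so treat this as a sketch of the shape rather than a line to paste as-is. The snippet only writes it to a scratch file for inspection.

```shell
# Write a sample restricted authorized_keys entry to a scratch file.
# Placeholders: the 10.0.0.* source pattern, the forced-command script path,
# and the truncated public key. A real entry is ONE line in
# ~/.ssh/authorized_keys on the server.
cat > authorized_keys.sample <<'EOF'
from="10.0.0.*",command="/usr/local/bin/report-only.sh",no-pty,no-port-forwarding ssh-ed25519 AAAA...your-public-key... jdoe@workstation
EOF
```

The forced command can still see what the client originally asked to run, in the SSH_ORIGINAL_COMMAND environment variable.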

For OpenSSH, on most unix distributions, you can create the key pair by running the following command and following the prompts. Be sure to enter a pass phrase and follow all company security requirements.

```shell
ssh-keygen
```

Now that you have your key pair, make sure that you have an ssh agent running. The ssh agent asks for the pass phrase to your private key when you load the key into it. From then on, any time an ssh client needs your private key, it asks the agent to use the key on its behalf, so you are not prompted for your pass phrase more than once per login session.
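A minimal sketch for a shell session where nothing else has started an agent (many desktop sessions already do). `ssh-agent -s` prints Bourne-shell commands that set SSH_AUTH_SOCK and SSH_AGENT_PID, which is why its output is passed to `eval`. The function names are hypothetical.

```shell
# True when SSH_AUTH_SOCK names an existing socket, i.e. an agent is
# probably available. A stale socket left by a dead agent also passes.
agent_running() {
    [ -n "${SSH_AUTH_SOCK:-}" ] && [ -S "$SSH_AUTH_SOCK" ]
}

# Start an agent only if none is reachable, then load the default key(s).
# ssh-add asks for the pass phrase once; later ssh, scp and sftp runs
# ask the agent instead of prompting you.
load_keys() {
    agent_running || eval "$(ssh-agent -s)"
    ssh-add
}
```

Run `load_keys` once per login session; `ssh-add -l` then lists the keys the agent holds.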

Configuration

I'd say the next most important thing to fully use ssh is to configure it properly. The ssh config file lets you set options once, so you don't have to think about them again: the port you connect to, which user name to use for a given system, which ssh protocol to use, or which tunnels to establish automatically. As you will see later, I'm not talking about a simple configuration file. Ultimately we will get into complex setups that let you log into a server several levels deep in an environment by typing one ssh command.
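As a sketch of what "set it once" looks like, the snippet below writes one stanza to a scratch file so you can review it before merging it into ~/.ssh/config. Every value (alias, host name, user, port, forwarded ports) is a placeholder.

```shell
# Write a sample stanza to ./ssh_config.sample (placeholders throughout).
# With it in place, "ssh db1" means: connect to db1.example.org on port 2222
# as jdoe, and forward local port 8443 to port 443 on that server
# ("localhost" in LocalForward is resolved on the server side).
cat > ssh_config.sample <<'EOF'
Host db1
    HostName db1.example.org
    User jdoe
    Port 2222
    LocalForward 8443 localhost:443
EOF
```

`ssh -F ssh_config.sample db1` uses only this file, and `ssh -G -F ssh_config.sample db1` prints the options ssh would apply without connecting (OpenSSH 6.8 and later).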

This also means that any utilities that rely on ssh can take advantage of the same features. Do you need to scp a 2 GB Solaris patch set to a server on the other side of a bastion jump host with only 100 MB of free space? With a proper config file, you can stream that patch set through the bastion jump host directly to the target system, never touching the free space on the host in the middle.
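The patch-set scenario can also be done as a one-off, without touching the config file, by passing the ProxyCommand option (covered in detail later) on the scp command line. A sketch, assuming a jump host called `bastion` with netcat installed as `netcat`, and a target called `target`; the function name is hypothetical.

```shell
# push_via_bastion FILE BASTION TARGET DEST
# scp talks to TARGET's sshd through netcat running on BASTION, so the
# file streams through the bastion without being written to its disk.
# ssh replaces %h and %p with TARGET's host name and port.
push_via_bastion() {
    local file="$1" bastion="$2" target="$3" dest="$4"
    scp -o "ProxyCommand=ssh ${bastion} netcat %h %p" "$file" "${target}:${dest}"
}
```

For example: `push_via_bastion patchset.zip bastion target /var/tmp/`.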

Automation

Now that we can log into remote systems automatically and securely, we can start to automate things. Much of ssh's day-to-day usefulness actually comes from automation. Done properly, you can run a process on your workstation that loops through all the systems in an environment performing a task. The task can be anything from checking the uptime of the remote system to as complex as you can imagine. I have a task that I run (almost) monthly that requires me to push an updated file to servers, install the file remotely via sudo, remotely execute the scanner that uses said file via sudo, collect the results back on my workstation, and finally upload the results to a central collection server. I run this process in a for loop across my entire environment. As an added bonus, it runs in the background in a minimized window while I go about other tasks.

All you need to do to run commands remotely via sudo is make sure that ssh allocates a pseudo-tty, which is easily done with the "-t" command line flag; sudo needs a terminal to prompt for your password. Here is the sequence for one host:

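```shell
h=$1   # myScript is called with the host name as its first argument
ssh "$h" rm -f /tmp/package.tar.gz                    # clear any stale upload
scp package.tar.gz "${h}:/tmp/package.tar.gz"
ssh "$h" rm -f /tmp/package.tar                       # gzip -d will not overwrite an existing file
ssh "$h" gzip -d /tmp/package.tar.gz                  # leaves /tmp/package.tar
ssh "$h" rm -Rf /tmp/package                          # clear any previous extraction
ssh "$h" tar -x -f /tmp/package.tar -C /tmp           # assumes the archive holds a top-level package/ directory
ssh -t "$h" sudo mv /tmp/package /usr/local/package   # if /usr/local/package exists, mv nests the copy inside it
ssh -t "$h" sudo /usr/local/package/scanWrapper.sh
scp "${h}:/usr/local/package/reports/${h}.csv" "scans/${h}.csv"
scp "scans/${h}.csv" "collectionHost:/usr/local/archive/${h}.csv"
```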

To make this process easier and more repeatable, I put the above lines in a script and then loop across the environment calling the script.

```shell
for h in $(cat hosts.txt); do myScript "$h"; done
```

Better Automation

You might be thinking to yourself that's an awful lot of connections to establish and tear down, especially when reverse DNS isn't working and each connection hangs for 30 seconds. (First, go fix reverse DNS.) Second, another of OpenSSH's wonderful abilities saves the day: OpenSSH can re-use connections. OpenSSH calls this "master mode" (the ControlMaster option, or -M on the command line), but I like to think of it as multiplexing. With multiplexing, your first connection still has to spend the time to establish the network connection. However, subsequent connections re-use the connection that the first one established, so their commands execute almost immediately, with no connection time. This means that the above sequence of commands executes at almost the same speed as it would if you were logged in to the remote system and running the commands manually.
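A minimal multiplexing setup, written to a scratch file for review. ControlMaster, ControlPath and ControlPersist are standard ssh_config options; the socket directory and the ten-minute persistence are choices made for this sketch, not requirements.

```shell
# The socket directory must exist before ssh can create sockets in it.
mkdir -p ~/.ssh/sockets
chmod 700 ~/.ssh/sockets

# ControlMaster auto: the first connection to a host becomes the master;
#   later ones reuse it through the socket named by ControlPath.
# ControlPath tokens: %r = remote user, %h = host, %p = port.
# ControlPersist: keep the master open 10 minutes after the last session
#   exits, so the next command in a loop skips connection setup.
cat > ssh_config.multiplex <<'EOF'
Host *
    ControlMaster auto
    ControlPath ~/.ssh/sockets/%r@%h:%p
    ControlPersist 10m
EOF
```

Because `Host *` matches everything, put it at the end of ~/.ssh/config so host-specific stanzas are read first. `ssh -O check HOST` reports whether a master is running, and `ssh -O exit HOST` closes it.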

Proxies

You might be thinking that this all sounds well and good, but how do I interface with something like a SOCKS 5 server that requires authentication? After all, when you skim the ssh man page, you see that OpenSSH no longer interfaces directly with a SOCKS 5 server, much less one that requires authentication. This is where OpenSSH's "ProxyCommand" comes into play. OpenSSH does not natively interface with any type of proxy server any more. Instead, in true unix fashion, it relies on an external program to talk to proxies. This means that the same generic interface works for an HTTP proxy as for a SOCKS 5 proxy that requires authentication. As you will see, we can even use this same external-process mechanism to connect to systems buried deep in the environment. To go through a SOCKS 5 proxy that requires a user name and password, use the external "connect-proxy" command via the ProxyCommand directive.
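A sketch of the ssh_config side, assuming the `connect-proxy` program (from the connect.c project) is installed. Its `-S` option names a SOCKS server as `[user@]host[:port]` and `-H` names an HTTP proxy; the proxy names, port numbers, user and host patterns below are placeholders. How connect-proxy obtains the SOCKS password (an interactive prompt or an environment variable) is described in its own documentation.

```shell
# Two kinds of proxy, one mechanism: ssh runs the command and talks to it
# over STDIN/STDOUT, while connect-proxy speaks the proxy protocol to reach %h:%p.
cat > ssh_config.proxies <<'EOF'
Host *.outside.example.com
    ProxyCommand connect-proxy -S jdoe@socks.example.com:1080 %h %p
Host *.partner.example.net
    ProxyCommand connect-proxy -H proxy.example.com:3128 %h %p
EOF
```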

ProxyCommand

OpenSSH's "ProxyCommand" directive really just uses standard input and standard output to communicate between itself and the external command. This use of STDIN / STDOUT is key to some of what we will be doing shortly.
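The smallest ProxyCommand that shows this STDIN/STDOUT contract: `nc` (netcat) opens the TCP connection itself and copies bytes between the socket and its own standard input and output, so the result behaves like a normal direct connection. ssh replaces `%h` and `%p` with the target host and port before running the command. The function name is hypothetical, and some systems install the binary as `netcat` rather than `nc`.

```shell
# Same as a plain "ssh HOST ...", except that netcat, not ssh, makes the
# TCP connection and ssh exchanges all traffic through netcat's pipes.
ssh_via_nc() {
    ssh -o "ProxyCommand=nc %h %p" "$@"
}
```

For example, `ssh_via_nc server1 uptime` behaves like `ssh server1 uptime`.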

We all know that SSH can also carry arbitrary network traffic via tunnels. With tunneling, you can make the administrative web page on the remote server available to the web browser running on your local workstation. Or, you can even expose a process running on your local workstation to a client on the remote system. With proper configuration of the SSH server daemon, you can even allow clients on either end of the connection to interact with the other end. SSH even makes it trivial to forward X11 traffic through the encrypted ssh connection, optionally including X11 authentication. So there is no longer any need to forward X11 traffic through the network unencrypted in parallel to your ssh traffic.
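Sketches of the three forwarding modes described above, wrapped in small functions whose names are hypothetical; the ports are placeholders. `-L`, `-R`, `-N`, `-g` and `-X` are standard ssh flags.

```shell
# Local forward: http://localhost:8080 on the workstation reaches port 80
# on HOST itself ("localhost" is resolved on the server side). -N: forward
# only, run no remote command. Add -g to let other machines use the port.
admin_page()   { ssh -N -L 8080:localhost:80 "$1"; }

# Remote forward: port 9000 on HOST reaches port 3000 on the workstation.
# Other machines can reach it only if the server's sshd_config sets GatewayPorts.
expose_local() { ssh -N -R 9000:localhost:3000 "$1"; }

# X11 forwarding inside the encrypted connection
# (the server's sshd_config must set X11Forwarding yes).
remote_xterm() { ssh -X "$1" xterm; }
```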

The Config File

With all the things that can be done with SSH, getting everything right on the command line can be anywhere from cumbersome to extremely complicated. Thankfully OpenSSH has a convenient per-user client configuration file (~/.ssh/config) that is used by ssh, scp and sftp. There is even a system-wide configuration file (/etc/ssh/ssh_config on most systems) that lets system administrators share configuration between multiple users. Better still, the configuration file is a text file with a relatively simple syntax, very similar to what is used on the command line.

ssh config file

```
Host server1.example.org server1 s1
    HostName server1.example.org
Host server2.example.org server2 s2
    HostName server2.example.org
Host wwwX.example.com wwwX wX
    HostName wwwX.example.com
    Port 2222
```

hosts file

```
127.0.0.1    localhost.localdomain localhost
192.168.0.2  server2.example.org server2 s2
192.168.0.2  wwwX.example.com wwwX wX
```

I highly recommend using host names rather than IPs in your ssh config file, because OpenSSH stores the remote system's public key (in ~/.ssh/known_hosts) under the HostName / IP that you connect to. In the above example, ssh stores the public keys of the remote systems as server1.example.org, server2.example.org and wwwX.example.com. Thus, when wwwX.example.com resolves to the same IP address as server2.example.org, via DNS or the hosts file, there is no conflict. However, if you always connected to IPs, the entries for server2 and wwwX could collide.
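To see which name a host key is filed under, you can ask ssh itself. `ssh -G` (OpenSSH 6.8 and later) prints the options ssh would use for an alias, one lowercase `keyword value` pair per line, without connecting. The sketch below (function name hypothetical) turns that into the known_hosts lookup name: the resolved HostName, or `[HostName]:port` for a non-default port, which is how known_hosts records such hosts. It ignores HostKeyAlias, which would override the name.

```shell
# known_hosts_name ALIAS
# Prints the name under which ssh files ALIAS's host key in known_hosts.
known_hosts_name() {
    ssh -G "$1" | awk '
        $1 == "hostname" { host = $2 }
        $1 == "port"     { port = $2 }
        END {
            if (port == "" || port == 22) print host
            else print "[" host "]:" port
        }'
}
```

Then `ssh-keygen -F "$(known_hosts_name wX)"` searches ~/.ssh/known_hosts for that entry, hashed or not.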

As an added bonus, anything that uses the OpenSSH clients (ssh, scp and sftp) benefits from the configuration file. This includes rsync, git, Vim's scp:// URLs and many more. So taking the time to create the configuration file will pay off in spades, daily.
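For example, with the aliases from the config example above (s1, s2, wX), each of these picks up HostName and Port from ~/.ssh/config because the tool runs ssh underneath. The remote paths and function names are placeholders.

```shell
push_site()  { rsync -av ./site/ "s1:/var/www/site/"; }   # rsync over ssh
clone_repo() { git clone "s2:/srv/git/project.git"; }     # git's host:path form
edit_hosts() { vim "scp://wX//etc/hosts"; }              # netrw: "//" starts an absolute path
```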

Let the fun begin!

OpenSSH follows the standard unix model and now relies on external programs to do the job for it. This means that with the proper external program you can interface with an HTTP proxy or a SOCKS proxy or any other program that reads from STDIN and writes to STDOUT. In short, OpenSSH can use just about any unix process to transport traffic.

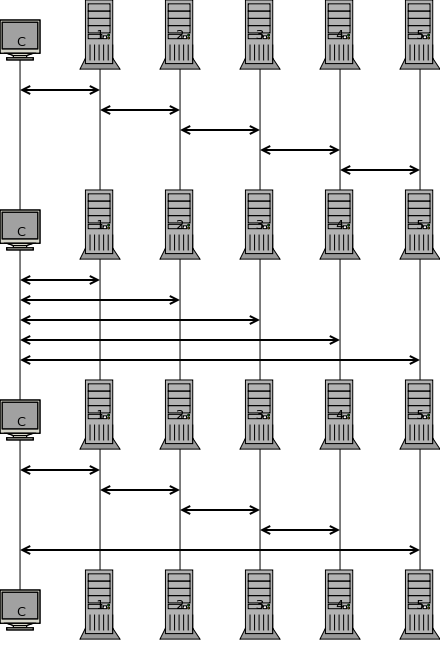

As I alluded to above, OpenSSH does not have to open a network connection of its own to communicate with the remote server. Any process can transport the traffic, even netcat connecting to a remote system. What's even more interesting is when that netcat process runs remotely on another system (see the diagram below). This means you can have ssh connect you to a system that is only reachable through another system, a bastion jump server.

[C] ... [1] ... [2]

Let's say that [C]lient wants to connect to system[2] on the other side of system[1]. We can easily tell the ssh client running on our system that it needs to use a "proxy" to connect to system[2]. However, we aren't going to use any typical network proxy. Instead, we are going to tell ssh to use the following command as the "proxy" to connect to system[2].

```shell
ssh system1 netcat system2 22
```

You see what's happening there? We are remotely executing netcat on system[1] and telling netcat to connect to port 22 on system[2]. The ssh session to system[1] carries netcat's input and output back to our local [C]lient, where we plug them into our ssh command. Said another way, we are telling our ssh client to connect to remote system[2] by remotely executing netcat on system[1].
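In ~/.ssh/config this becomes a ProxyCommand entry. ssh substitutes `%h` and `%p` with the target's host name and port (system2 and 22 here), so it runs exactly the command above. The snippet writes the stanza to a scratch file first; the host names are this article's placeholders, and on some systems the netcat binary is called `nc`.

```shell
# One-hop stanza: reach system2 by running netcat on system1.
cat > ssh_config.hop1 <<'EOF'
Host system2
    ProxyCommand ssh system1 netcat %h %p
EOF
```

`ssh -F ssh_config.hop1 system2` tries it out. Once it works, append the stanza to ~/.ssh/config, and plain `ssh system2`, `scp file system2:`, rsync and friends all go through system1.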

Picture this

Now, you might be asking what the advantage of this is when you could simply ssh to system[1] and then ssh from there to system[2]. You'd have a point if all you wanted to do was interact with processes running on system[2]. However, that approach is essentially like watching system[1]'s screen while it shows a camera feed pointed at system[2]'s screen. In other words, it's a copy of a copy. While this works for many interactive things, it is rather limited. You can't scp files from your [C]lient to system[2] without first staging them on system[1], nor can you use anything else that needs to communicate directly with processes running on system[2], like sftp, rsync, git, etc.

It is much better to use the ProxyCommand directive to tell the ssh client how to connect to system[2] and pass the data to ssh running on our [C]lient. When we use the ProxyCommand directive, what really happens is that the ssh command we type spawns a second ssh command in the background, which remotely executes netcat on system[1] to connect to system[2]. This background ssh command connects the input and output of netcat running on system[1] to the first ssh command we started on our [C]lient. This means that the two end points of our ssh connection to system[2] really are our [C]lient and system[2]. So we effectively have a communications path between our [C]lient and system[2] over which to establish an ssh connection, which means that we can now remotely execute commands and use scp, sftp, rsync, git, etc. No more copy of a copy.

SSH Agent

Before we go further, I want to remind you that SSH keys and the ssh agent are imperative from here on out. The agent supplies the keys that authenticate all of these ssh connections. You can rely on a user name and password to authenticate to the first one; however, the backgrounded remote execution of netcat will fail without key-based authentication.

Even More FUN

Now, let's make this a bit more complicated and more like the real world situation that I support daily with one of my clients.

[C] ... [1] ... [2] ... [3]

Yep, I now need to get to system[3], which sits behind system[2], passing through two bastion jump hosts to get there. We can easily extend the process we just used and tell ssh that to get to system[3], it has to run a remote netcat on system[2]. Thankfully, ssh is smart enough to know that to get to system[2], it first has to connect to system[1] and run a netcat there, as long as the config file already tells it how to reach system[2].

In this case, our ssh command spawns a second ssh command in the background that remotely executes netcat on system[2]. However, to do that, our second (backgrounded) ssh command has to spawn another backgrounded ssh command to run netcat on system[1]. Ultimately our ssh process still gets an end-to-end connection between our [C]lient and system[3], so we can still use our preferred utility to transfer data between [C]lient and system[3]: scp, sftp, rsync, git, vim scp://, etc.
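The nested version needs one stanza per hop. Asking for system3 runs `ssh system2 netcat system3 22`; that inner ssh reads the same config, finds system2's ProxyCommand, and in turn runs `ssh system1 netcat system2 22`. Host names are this article's placeholders.

```shell
# These stanzas belong in ~/.ssh/config itself: the inner ssh commands
# spawned by ProxyCommand read the default config, not a file given with -F.
cat > ssh_config.nested <<'EOF'
Host system2
    ProxyCommand ssh system1 netcat %h %p
Host system3
    ProxyCommand ssh system2 netcat %h %p
EOF
```

After appending the file to ~/.ssh/config, `ssh -v system3` shows each proxy command as it is executed.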

You might be wondering how many levels deep this process can go. In short, as many as your bandwidth allows. Why did I say "bandwidth"? Well, nesting connections this way carries some overhead, because each level wraps the traffic of the level inside it in another encrypted ssh session. Mark Krenz of Suso Technology Services Inc. (www.suso.com) created a video, "The dangers of SSH tunnel nesting: Generating 200MB of traffic from 1 byte", discussing how the deeper you nest the connections, the more overhead you incur. Before you get too worried about the bandwidth overhead, realize that Mark was testing sixteen levels of nesting. Better still, I'm about to show you how to do this with at most one level of nesting, so you can go as many levels deep as you want for the fixed overhead of one level.

Now what?

How do we accomplish as many levels of nesting as we want with the overhead of one level? Simple. We combine the two methods above. We execute a remote command on system[1] that executes a remote command on system[2] that connects to system[3].

```shell
ssh system1 ssh system2 netcat system3 22
```

See there? We are only running one backgrounded ssh connection on our [C]lient. It's just that this backgrounded connection executes a complex remote command sequence. The real win is that the complex remote command is a daisy chain of remote commands that ultimately establishes a connection between our [C]lient and system[3]. So we once again have all the features we have been talking about, with minimal bandwidth overhead, no matter how deep in the environment we have to go.

Remember, the "daisy chained" connections don't offer all the features that we want, and "nested tunnels" run into bandwidth constraints. Fortunately for us, a "chained tunnel" is the best of both worlds, with the fixed overhead of one nested connection.

In case you are wondering, yes, the ssh config file can handle this. I have created a config file that 50 to 100 different people use every weekday to connect to a client's environment that requires up to six levels of nesting, and it works exactly as I have described. With careful planning of your ssh config file, you can have this too.
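Hand-writing chained commands gets tedious at six levels, so here is a sketch of a helper (the name is hypothetical) that prints a chained stanza for any depth. It assumes your keys live only in the agent on your workstation, so each hop that itself runs ssh gets `-A` (agent forwarding) to authenticate to the next hop. Agent forwarding lets root on those hosts use your agent while you are connected; drop `-A` if your keys are installed on the jump hosts instead. Forwarding the agent is an assumption of this sketch, not a detail from the config described above.

```shell
# chain_stanza TARGET HOP1 [HOP2 ...]
# Prints an ssh_config stanza that reaches TARGET through one remote command
# chain: ssh to HOP1, which runs ssh to HOP2, ..., and the last hop runs
# netcat to TARGET. Only one background ssh runs on the workstation.
chain_stanza() {
    if [ "$#" -lt 2 ]; then
        echo "usage: chain_stanza TARGET HOP1 [HOP2 ...]" >&2
        return 1
    fi
    local target="$1" cmd="" hop
    shift
    local remaining="$#"
    for hop in "$@"; do
        remaining=$((remaining - 1))
        if [ "$remaining" -gt 0 ]; then
            # This hop runs the next ssh, so forward the agent to it.
            cmd="${cmd}ssh -A ${hop} "
        else
            # The last hop only runs netcat.
            cmd="${cmd}ssh ${hop} "
        fi
    done
    printf 'Host %s\n    ProxyCommand %snetcat %%h %%p\n' "$target" "$cmd"
}
```

For example, `chain_stanza system3 system1 system2 >> ~/.ssh/config` appends a stanza whose ProxyCommand is `ssh -A system1 ssh system2 netcat %h %p`.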

Troubleshooting

So, what if something breaks or simply decides not to work? Don't worry, it's not difficult to troubleshoot. (Yes, something will fail to work some day.) To troubleshoot connecting to system[3], just run the ssh command with the verbose flag.

```shell
ssh -v system3
```

Look for the "Executing proxy command" line near the top of the output to find out which system the remote command is being executed on. (Either wait for the command to time out, or cancel it.) Then try connecting to that system, system[2] in our example.

```shell
ssh -v system2
```

Repeat the process all the way back to the beginning until you find a system you can actually connect to, and see what output it gives you. Usually, in my case, the problem is an expired password on one of the intermediary bastion jump hosts, so all I have to do is change or renew my password to be able to connect to the end system again. Remember, SSH keys let us authenticate to the remote system, but we still need an account in good standing with a current, non-expired password.
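The walk-back can also be scripted. A sketch with a hypothetical function name: it tries each host in order with a trivial command and stops at the first failure. `BatchMode=yes` makes ssh fail instead of prompting, so an expired password shows up as a failure rather than a hung prompt, and `ConnectTimeout` limits how long ssh waits to connect. It walks the chain from the front rather than the back, which finds the same broken hop when each host's stanza routes through the ones before it.

```shell
# check_hops HOST...
# Prints "ok" for each reachable host; on the first failure, prints the
# command to rerun verbosely and returns non-zero.
check_hops() {
    local h
    for h in "$@"; do
        if ssh -n -o BatchMode=yes -o ConnectTimeout=10 "$h" true; then
            echo "ok:   $h"
        else
            echo "FAIL: $h (rerun: ssh -v $h)"
            return 1
        fi
    done
}
```

For example: `check_hops system1 system2 system3`.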